I built ac-trace to question the trust we place in passing tests

ac-trace is an early, narrow experiment in checking whether tested systems are actually defended

AI-assisted coding is making one part of software development much faster than another: it is becoming easier to generate code and tests, but not easier to know what is actually protected.

That gap worries me. A green test suite can look convincing. Coverage can look convincing too. But neither one proves that the acceptance criteria — the behaviors the system is supposed to guarantee — are truly defended. And when AI helps produce both the implementation and the tests at speed, it becomes much easier to mistake test activity for real confidence.

That is why I built ac-trace, a new open-source tool.

The problem is simple. Teams often reason as if this chain held: the tests pass, the code is covered, therefore the requirement is safe. But passing tests, coverage, and a defended requirement are three different signals. Code can be exercised without the important behavior being strongly checked. Tests can pass while the actual acceptance criterion is still weakly defended.

A realistic example: imagine a billing service with a rule that premium users must never be charged above their monthly cap.

You may have tests for invoice creation. You may have tests that run the premium billing path. You may even hit the exact function where the cap logic lives, so coverage looks good. But if someone removes the cap check or flips the comparison and the tests still pass, then the requirement was never really protected. The code ran. The suite stayed green. The acceptance criterion still had a confidence gap.
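To make that concrete, here is a minimal sketch of the billing example. Everything in it is hypothetical and invented for illustration (the `create_invoice` function, the `Invoice` type, the 5,000-cent cap); it is not taken from any real billing system. Both tests execute the cap branch, so coverage is identical, but only the second one would fail if the cap check were removed or the comparison flipped.

```python
from dataclasses import dataclass

# Hypothetical cap, chosen only for this illustration.
MONTHLY_CAP_CENTS = 5_000


@dataclass
class Invoice:
    user_tier: str
    amount_cents: int


def create_invoice(user_tier: str, usage_cents: int) -> Invoice:
    """Bill usage, capping premium users at MONTHLY_CAP_CENTS."""
    amount = usage_cents
    if user_tier == "premium" and amount > MONTHLY_CAP_CENTS:
        amount = MONTHLY_CAP_CENTS
    return Invoice(user_tier, amount)


def test_premium_invoice_is_created():
    # Weak: runs the cap branch, so coverage looks good,
    # but nothing here depends on the cap being applied.
    invoice = create_invoice("premium", 9_000)
    assert invoice.user_tier == "premium"
    assert invoice.amount_cents > 0


def test_premium_invoice_never_exceeds_cap():
    # Strong: fails if the cap check is deleted or `>` is flipped to `<`.
    invoice = create_invoice("premium", 9_000)
    assert invoice.amount_cents == MONTHLY_CAP_CENTS
```

If the suite contained only the first test, deleting the `if` block would leave everything green, which is exactly the confidence gap described above.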

This becomes more dangerous with AI-assisted coding.

AI is very good at producing plausible code and plausible tests. That is useful. But it also lowers the cost of producing a large amount of evidence that looks reassuring. More tests, more mocks, more fixtures, and more green pipelines can pile up without a proportional increase in justified confidence. The faster teams generate software artifacts, the easier it becomes to confuse speed and volume with protection.

So I wanted a tool that asks a more specific question: not just whether tests exist, and not just whether code is covered, but whether the tests actually defend the acceptance criteria they are supposed to protect.

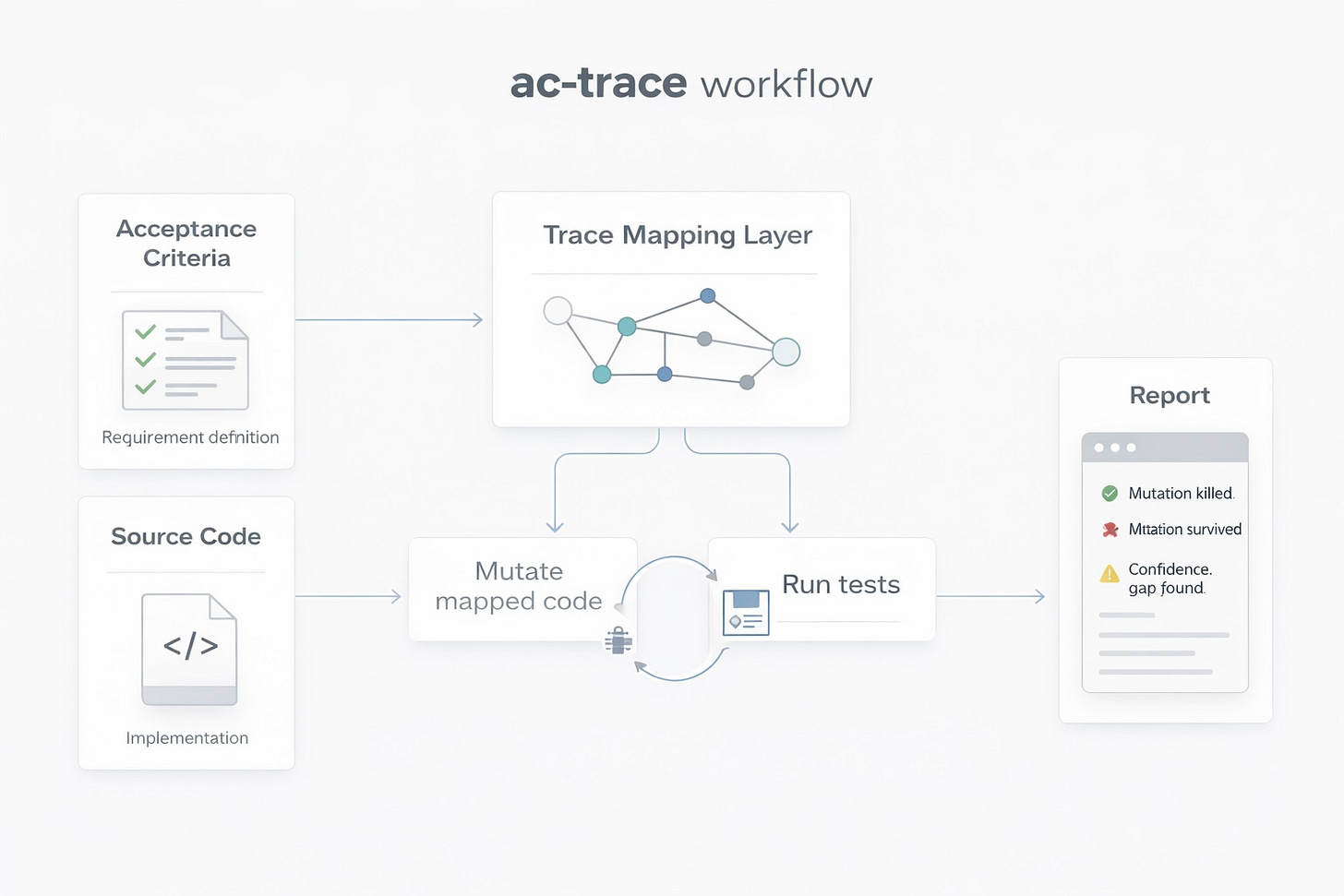

ac-trace maps acceptance criteria to code and tests, then mutates the mapped code to check whether the linked tests actually catch the breakage. In simple terms: if a requirement is really protected, then deliberately breaking the relevant implementation should cause the relevant tests to fail.
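The idea of "break the mapped code, then see whether the linked tests notice" can be sketched in a few lines of plain Python. This is not ac-trace's implementation or API; it is a toy illustration using only the standard `ast` module, and every name in it (`charge`, `FlipGreaterThan`, `survives_mutation`, the two test functions) is an assumption made up for the example. It applies a single mutation, flipping `>` to `<`, and reports whether a given test still passes on the broken code.

```python
import ast

# Hypothetical "mapped code" for the premium-cap criterion.
SOURCE = '''
def charge(tier, usage, cap=5000):
    if tier == "premium" and usage > cap:
        return cap
    return usage
'''


class FlipGreaterThan(ast.NodeTransformer):
    """Mutation: turn every `>` comparison into `<`."""

    def visit_Compare(self, node):
        self.generic_visit(node)
        node.ops = [ast.Lt() if isinstance(op, ast.Gt) else op for op in node.ops]
        return node


def load_charge(tree):
    """Compile a module AST and return the `charge` function it defines."""
    namespace = {}
    exec(compile(tree, "<mapped-code>", "exec"), namespace)
    return namespace["charge"]


def weak_test(charge):
    # Exercises the premium path but does not check the cap.
    return charge("premium", 9000) > 0


def strong_test(charge):
    # Checks the behavior the criterion actually guarantees.
    return charge("premium", 9000) == 5000


def survives_mutation(test):
    """Return True if `test` still passes after the code is mutated."""
    original = load_charge(ast.parse(SOURCE))
    mutant_tree = FlipGreaterThan().visit(ast.parse(SOURCE))
    mutant = load_charge(ast.fix_missing_locations(mutant_tree))
    assert test(original), "test must pass on the unmutated code"
    return test(mutant)


if __name__ == "__main__":
    for test in (weak_test, strong_test):
        verdict = "SURVIVED (gap)" if survives_mutation(test) else "killed"
        print(f"{test.__name__}: mutant {verdict}")
```

A mutant that survives means the linked test did not defend the criterion; a mutant that is killed means the test caught the breakage. A real tool would need many more mutation kinds and would run the actual test suite rather than in-process callables.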

The current scope is intentionally narrow. Right now, ac-trace focuses on Python + pytest. It uses a YAML manifest, can infer links from annotated tests, and generates reports showing what was mapped and what happened when the mapped code was mutated.

This is an early experiment, not a grand claim. I am not trying to “solve testing.” I just want to make one important gap more visible: passing tests are often a weaker signal than teams think, and AI-assisted coding increases the risk of over-trusting them.

So this is the launch: ac-trace is now open source.

If this problem sounds familiar, check out the repo. Try it on a small project. Tell me where it is useful, where it is naive, and where it should go next.
